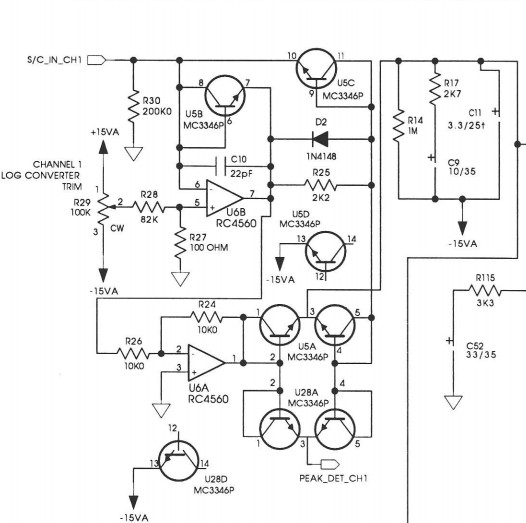

Yup, the dirty secret about precision rectifiers is how they suffer from limited loop gain. I recall that when I first fired up my prototype TS-1 with a standard precision rectifier, I was reading a measurement noise floor well below -100 dB. But before patting myself on the back, I figured out I was seeing a measurement error caused by less than 20 kHz of bandwidth. Even after my best effort, I was still getting bandwidth error below -50 dBu. IIRC, at -60 dB the -3 dB point was 10 kHz, at -70 dB it was 5 kHz, etc. LF and midband noise were still reported accurately.
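A rough way to see the trend in those recalled numbers: the -3 dB point halves for every 10 dB drop in level. The sketch below fits that rule to three points: -60 dB at 10 kHz and -70 dB at 5 kHz from the recollection above, plus -50 dB at 20 kHz from the follow-up about the -3 dB point. The function name and the assumption that the full 20 kHz band holds above -50 dB are illustrative, not measured TS-1 behavior.

```python
# Recalled (IIRC) -3 dB points for the uncompressed precision rectifier:
# signal level in dB -> -3 dB frequency in Hz.
RECALLED_POINTS = {-50.0: 20_000.0, -60.0: 10_000.0, -70.0: 5_000.0}


def rectifier_bw_hz(level_db: float) -> float:
    """Approximate -3 dB bandwidth at a given signal level.

    Rule of thumb fitted to the recalled points: the bandwidth halves for
    every 10 dB drop below -50 dB. Above -50 dB we assume (hypothetically)
    that the full 20 kHz audio band is available.
    """
    if level_db >= -50.0:
        return 20_000.0
    return 20_000.0 * 2.0 ** ((level_db + 50.0) / 10.0)


# Compare the rule against the recalled points.
for level, recalled in sorted(RECALLED_POINTS.items(), reverse=True):
    print(f"{level:6.1f} dB: recalled {recalled:7.0f} Hz, rule {rectifier_bw_hz(level):7.0f} Hz")
```

Extrapolating the same rule further down is only a guess, but it shows why a noise floor near -100 dB read so optimistically: most of the audio band simply wasn't being seen.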

Later I thought of using the rectifier output to drive a gain element, so I could effectively compress the rectifier's input 2:1. That way, the -50 dB level where my -3 dB point sat at 20 kHz would map out to -100 dB.
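The level mapping is simple arithmetic in the log domain. Assuming the 2:1 compressor has unity gain at 0 dB (an assumption; the pivot point isn't stated), the rectifier sees half the input level in dB, and the true reading is recovered by doubling the log output. The function and constant names here are hypothetical.

```python
# Hypothetical 2:1 compressor ahead of the rectifier, assumed to have unity
# gain at 0 dB, so in the log domain the rectifier sees input_db / RATIO.
RATIO = 2.0


def level_at_rectifier(input_db: float, ratio: float = RATIO) -> float:
    """Level (dB) reaching the rectifier for a given input level."""
    return input_db / ratio


def decode_reading(rectifier_db: float, ratio: float = RATIO) -> float:
    """Undo the compression in the log domain by scaling back up by the ratio."""
    return rectifier_db * ratio


# Level at the rectifier where the -3 dB point sat at 20 kHz.
RECTIFIER_20K_LIMIT_DB = -50.0
print(decode_reading(RECTIFIER_20K_LIMIT_DB))  # prints -100.0 (input level that now lands there)
print(level_at_rectifier(-100.0))              # prints -50.0
```

Under this simple model, any error in the level reaching the rectifier is also doubled by the decode step, which is one way to see why the gain element needed good gain accuracy more than low distortion.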

There was also a benefit from the compression at high levels, since I was getting a fraction of a dB of error at +20 dBu from the logging transistor's real (ohmic) base resistance, Rbb. Distortion in this measurement path was not critical; it only needed good gain accuracy, so I could have used a cheap OTA (or a low-performance VCA, since I already had a log voltage available inside the TS-1).
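The high-level error can be sketched with a simple transdiode model: the log element's voltage is VT·ln(I/Is) plus an I·R term from its ohmic resistance, and that extra voltage reads as extra dB. R_SERIES and I_AT_0DB below are hypothetical values picked only so the uncompressed error at +20 dB lands at a fraction of a dB. They are not TS-1 figures, and lumping Rbb into one effective series resistance is itself a simplification.

```python
import math

# Thermal voltage kT/q at roughly 300 K, in volts.
VT = 0.02585
# Ideal transdiode log slope: volts of Vbe change per dB of current change.
VOLTS_PER_DB = VT * math.log(10) / 20  # about 2.98 mV/dB

# Hypothetical values for illustration only (not TS-1 figures):
R_SERIES = 20.0    # ohms, effective ohmic resistance in series with the junction
I_AT_0DB = 10e-6   # amps through the log element at a 0 dB signal level


def series_r_error_db(level_db: float, ratio: float = 1.0) -> float:
    """Reading error in dB caused by the I*R term on top of VT*ln(I/Is).

    With a ratio:1 compressor pivoting at 0 dB, the log element only sees
    level_db / ratio, but the decoded reading is multiplied back up by
    ratio, so the raw error gets multiplied by ratio as well.
    """
    current = I_AT_0DB * 10 ** (level_db / ratio / 20)
    raw_error_db = current * R_SERIES / VOLTS_PER_DB
    return raw_error_db * ratio


for ratio in (1.0, 2.0):
    print(f"ratio {ratio:.0f}:1 -> error at +20 dB: {series_r_error_db(20.0, ratio):.2f} dB")
```

Whatever the actual resistance and current scaling, this model says 2:1 compression cuts the +20 dB error to 2/√10 (about 63%) of the uncompressed value: the current drops by √10, and the decode step doubles what's left.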

But nowadays, I'd do it all with a $2 micro... cheaper, better, yadda yadda...

JR

PS: I've seen some other Buff circuits, and he was doing pretty slick stuff in the log domain... It's kind of a shame he bailed from the audio business, but he has apparently done well with photography (strobes) and avoided all the competition and price compression we suffered in the audio business. I expect photography was not a free ride, though.
